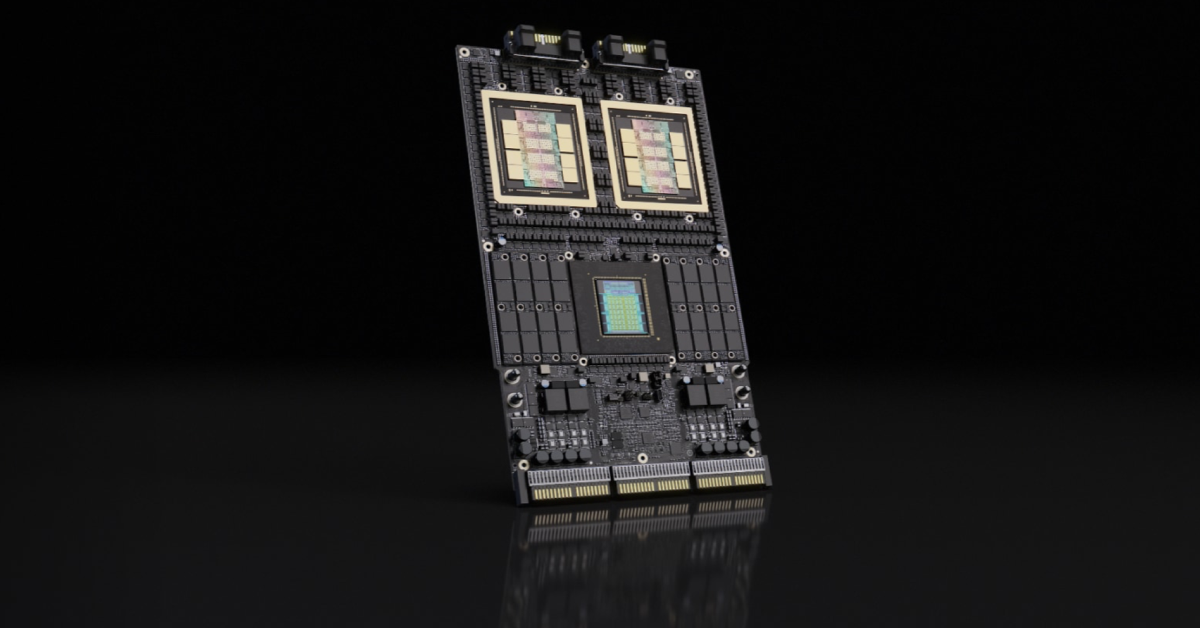

SK hynix starts mass production of 192GB SOCAMM2 for NVIDIA AI servers

If you think AI progress is all about GPUs, you are missing half the story. Memory is quickly becoming the real choke point, and SK hynix seems eager to cash in on that. The company says it has kicked off mass production of a 192GB SOCAMM2 module built on its latest 1cnm LPDDR5X DRAM. That …