OpenAI is rolling out a new ChatGPT feature called Trusted Contact, and folks are probably going to have strong opinions about it.

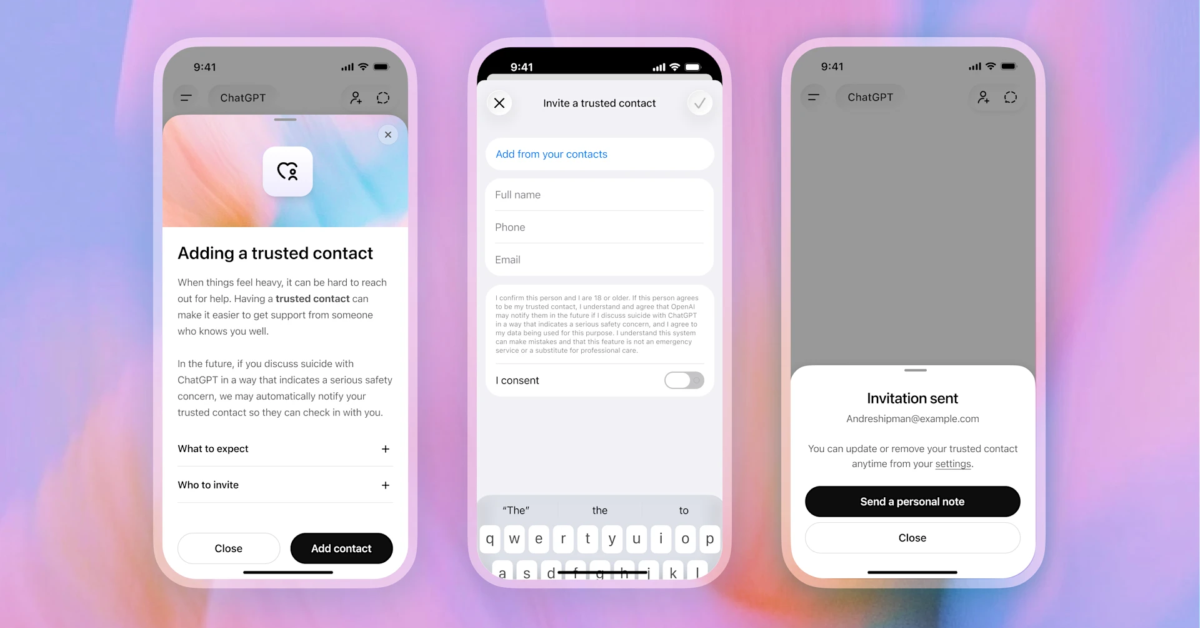

The optional tool allows adults to nominate a trusted person, such as a family member, close friend, or caregiver, who could be notified if ChatGPT believes the user may be at serious risk of self-harm. According to OpenAI, the system combines automated detection with human review before any alert is sent.

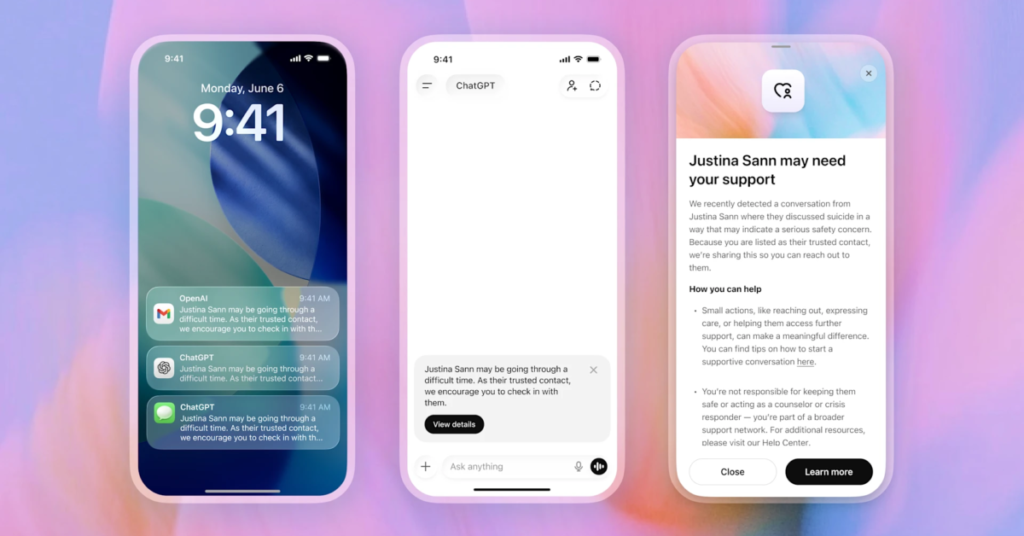

In other words, if someone using ChatGPT says things that raise serious concern, OpenAI may contact the person the user selected ahead of time. The company says the notifications are intentionally vague and won’t include private chat transcripts or detailed conversation history. Instead, the trusted person would simply get a message suggesting the user may need support.

Predictably, this is the kind of announcement that’s going to make some people deeply uncomfortable.

On one hand, there’s a reasonable argument that this feature could help save lives. People already use AI chatbots for emotional support, venting, and deeply personal conversations. If somebody is spiraling and has nobody around, even a small intervention could matter.

On the other hand, this raises some pretty serious privacy questions. Even though the feature is opt-in, some users are not going to love the idea of AI systems monitoring conversations closely enough to potentially trigger a human escalation process. False positives are also inevitable. Human expression is messy: people are sarcastic, they exaggerate, they vent, and they are sometimes dramatic. AI does not always understand that nuance the way actual people do.

OpenAI says Trusted Contact works in several stages. First, the user must manually add a trusted adult in ChatGPT's settings. That person then has to accept the invitation before the feature becomes active. If OpenAI's systems later detect what they consider a potentially dangerous discussion of self-harm, ChatGPT warns the user that their Trusted Contact may be notified and encourages them to reach out to that person themselves first.

After that, specially trained reviewers evaluate the situation. If they believe the threat could be serious, OpenAI may send a notification through email, text message, or the ChatGPT app.

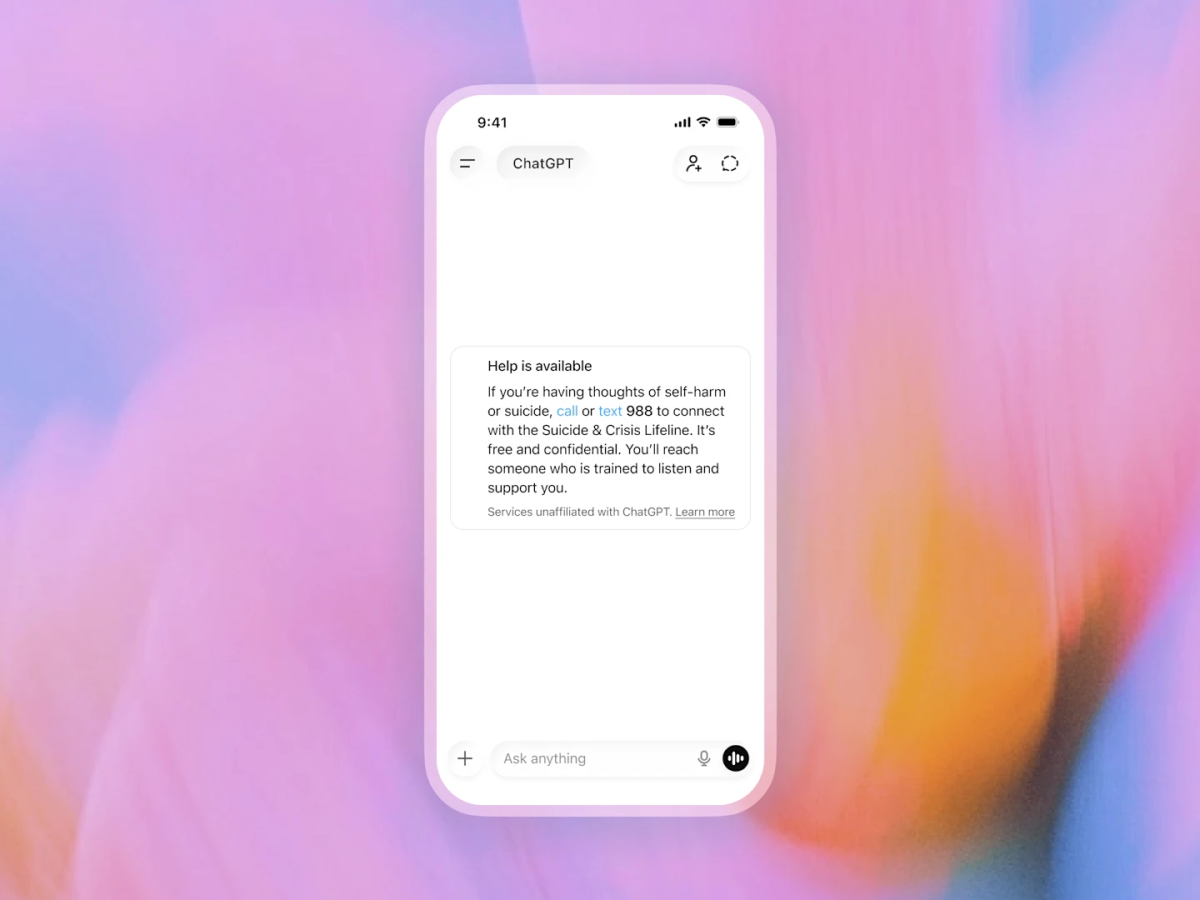

The company says it worked with mental health professionals, suicide prevention experts, and organizations like the American Psychological Association while developing the feature. OpenAI also says ChatGPT continues to refuse requests involving suicide instructions or self-harm guidance.

This all comes as AI chatbots continue drifting into territory that once belonged almost entirely to therapists, counselors, and human support systems. That evolution has been happening quietly for a while now, but Trusted Contact makes it impossible to ignore.

Some users will probably welcome the extra safety layer. Others may see it as one more reason not to share deeply personal thoughts with AI systems at all.

Either way, OpenAI clearly believes ChatGPT is becoming more than just a chatbot. Whether that’s comforting or unsettling likely depends on who you ask.