Cadence has pulled back the curtain on something that could shape the future of AI hardware. The company has taped out the first complete LPDDR6 and LPDDR5X memory IP system. That’s a big milestone, especially for chipmakers working on AI inference, large language models, and other high-bandwidth workloads.

The new system reaches data rates of up to 14.4Gbps per pin. That’s a major jump over previous generations and exactly the kind of bandwidth upgrade AI hardware needs right now. When you’re feeding massive neural networks or running agentic AI at scale, slow memory can become the bottleneck. Cadence is offering a way to break through that wall.
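To put the 14.4Gbps figure in perspective, here is a rough sketch of how a per-pin data rate translates into peak interface bandwidth: multiply the rate by the number of data pins and divide by eight to get bytes. The bus widths below are hypothetical and chosen only for illustration, since the announcement as described here doesn't specify one. The function name is also made up for this example, and the result is a theoretical ceiling that ignores protocol overhead, refresh, and real-world efficiency.

```python
def peak_bandwidth_gbytes(rate_gbps_per_pin: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s for a per-pin rate and a bus width."""
    if rate_gbps_per_pin <= 0 or bus_width_bits <= 0:
        raise ValueError("rate and bus width must be positive")
    # Gbit/s per pin * number of data pins = total Gbit/s; divide by 8 for GB/s.
    return rate_gbps_per_pin * bus_width_bits / 8


if __name__ == "__main__":
    RATE_GBPS = 14.4  # per-pin data rate quoted in the article
    # Hypothetical bus widths (assumptions, not from the announcement).
    for width in (16, 32, 64, 128):
        bandwidth = peak_bandwidth_gbytes(RATE_GBPS, width)
        print(f"{width:>3}-bit bus: {bandwidth:.1f} GB/s peak")
```

Under these assumptions, a 64-bit interface at 14.4Gbps would top out around 115.2 GB/s on paper. Actual sustained throughput would be lower and depends on the controller, the access pattern, and the memory configuration.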

To be clear, this isn’t a standalone chip. It’s a full IP package that other companies can license and integrate into their own processors. It includes a physical-layer interface (PHY), a memory controller, and a full verification setup so engineers can make sure everything works before fabrication.

What makes this release even more interesting is how flexible it is. It works in both traditional system-on-chip designs and newer chiplet-based architectures. That’s important because a lot of modern hardware is moving away from big monolithic chips in favor of modular, multi-die systems. Cadence built this LPDDR6 platform to slot into either approach.

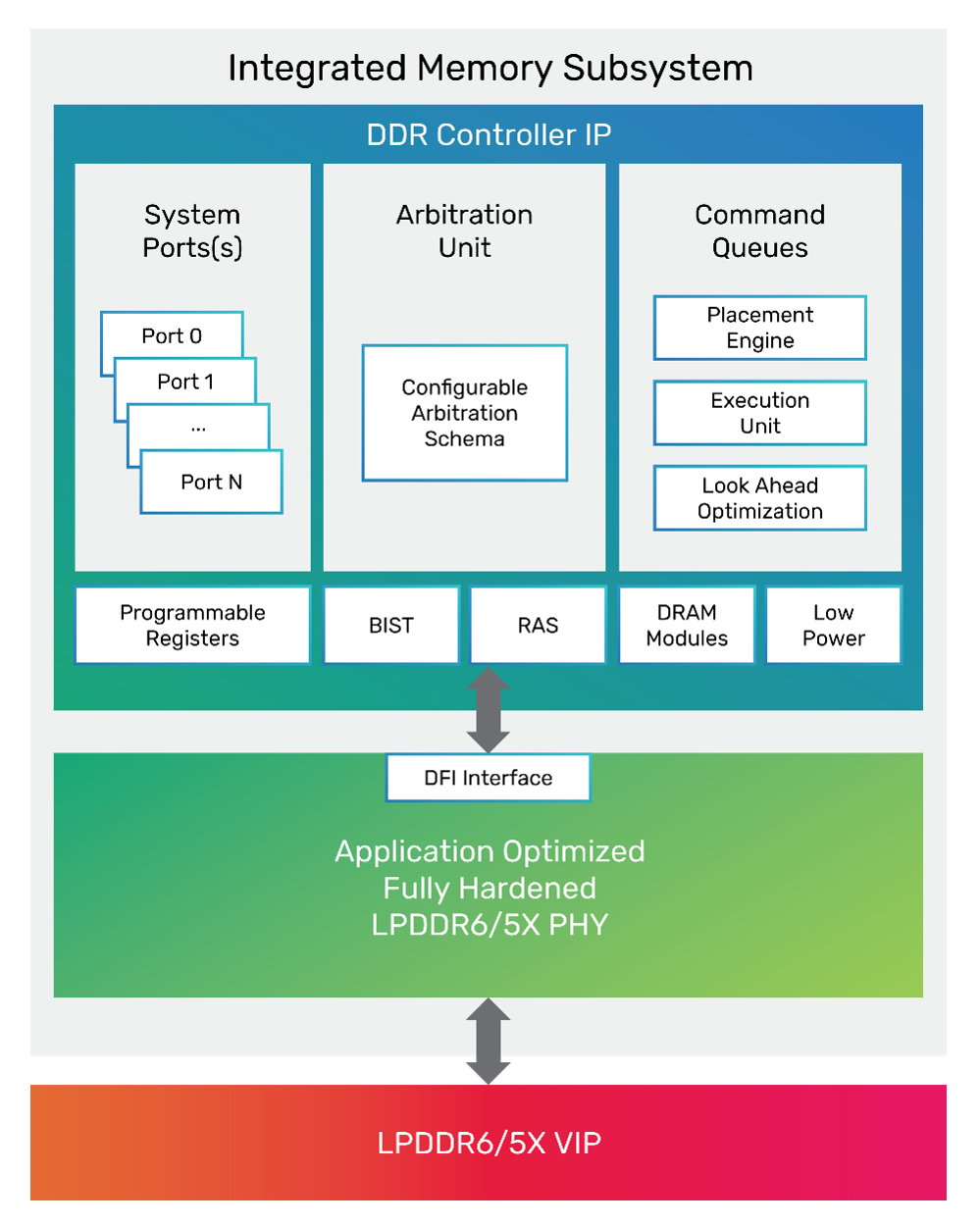

The memory controller is provided as soft RTL, so it can be customized depending on what matters most in the final chip. Designers can tune it to prioritize power efficiency, performance, or footprint. The physical layer, on the other hand, comes as a hardened macro, making it easier to plug in and tape out quickly.

This IP also supports LPDDR5X CAMM2, which is starting to show up in newer laptops and high-end mobile gear. That opens up a range of potential uses beyond just data centers. AI workloads at the edge, gaming handhelds, and even next-gen phones could benefit from this tech.

Cadence says several companies are already engaged with the IP, including those working in AI, high-performance computing, and cloud infrastructure. The company didn’t name names, but it’s not hard to guess who might be interested. Anyone building chips for AI inference at scale is going to be looking closely at LPDDR6.

Ultimately, Cadence isn’t just chasing higher speeds for the sake of it. This LPDDR6 platform reflects how chip design is evolving. AI hardware is pushing memory harder than ever, and with chiplets quickly becoming the new normal, having a flexible, fast, and power-efficient memory interface is no longer optional.